As AI voice technology becomes central to how businesses communicate, the need for faster, more scalable, and more secure infrastructure grows. Teams building large-scale applications for contact centers, customer support, healthcare communication, and similar use cases often rely on APIs to generate and deliver reliable voice at scale. With recent advances in LLM technology, an MCP server now makes it possible to voice intelligent responses for professional customer interactions.

So, what exactly is an MCP server, and why should you read on if you’re building with AI voice?

What is an MCP Server?

An MCP server is a Model Context Protocol server, which acts as a control hub that allows AI tools to continuously “talk” to external systems.

MCP servers create a standardized way for large language models (LLMs) and AI platforms to exchange information with databases, APIs, or third-party tools.

Simply put, without an MCP server, you’d need to build a one-off integration every time you wanted your AI to interact with another piece of software. With an MCP server, you get a common framework that reduces the number of custom integrations to build and maintain, increases security, and streamlines the workflow.

That’s why we’re excited to unveil our new MCP server, which delivers seamless, standardized, high-performance integration between AI voice and external systems.

To get a better understanding, watch our WellSaid MCP Server demo!

WellSaid’s new MCP Server

Embedded directly into our text-to-speech (TTS) environment, our MCP server extends WellSaid’s reach well beyond simply generating natural-sounding audio. The server functions as both a controller and an integrator, enabling developers to manage voice generation workflows using a consistent API framework.

Key features of WellSaid’s MCP Server

Installation made simple

The MCP Server has a smooth setup experience that’s accessible to developers and teams eager to quickly integrate the tool, so long as they have a WellSaid API key. With limited prerequisites, installation can be done in minutes, and teams can immediately use the tool in their MCP-compatible AI client, such as Claude.
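As a rough illustration of what that setup can involve: many MCP clients, Claude Desktop among them, register servers in a JSON config file under an `mcpServers` key. The Python sketch below builds such an entry. The server name `wellsaid`, the placeholder launch command, and the `WELLSAID_API_KEY` variable name are assumptions made for illustration; the actual values come from the WellSaid API Docs.

```python
import json


def build_mcp_server_entry(command, args, api_key):
    """Return an `mcpServers` config fragment for an MCP-compatible client.

    The "wellsaid" server name and the WELLSAID_API_KEY variable name are
    hypothetical; use the values given in the official setup instructions.
    """
    return {
        "mcpServers": {
            "wellsaid": {
                "command": command,
                "args": list(args),
                "env": {"WELLSAID_API_KEY": api_key},
            }
        }
    }


if __name__ == "__main__":
    # Placeholders only: replace each value with the one from the setup guide.
    config = build_mcp_server_entry(
        command="<launch-command>",
        args=["<server-package-or-path>"],
        api_key="<your-api-key>",
    )
    print(json.dumps(config, indent=2))
```

The printed JSON would then be merged into the client's own configuration file, whose location depends on the client.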

Advanced voice controls, voice discovery, and speech generation

Once connected, your AI client can call WellSaid functions through natural prompts, such as:

- List available filters for voices or voice characteristics

- Ex. “Show me all available voice filtering options” or “What voice characteristics can I choose from?”

- Search for voices matching specific traits

- Ex. “Use a confident, professional female voice with a US accent”

- Modify pitch, change speaking speed, control loudness, or apply respellings to override pronunciations

- Ex. “Speed it up, put more emphasis on the word Subjective. Pronounce WellSaid as ::WEL-sed::”

- Convert text into speech with a selected voice

- Ex. “Generate speech for ‘Welcome to our training program’ using speaker ID 145”

- Generate multi-speaker or long-form audio

- Ex. “Create a dialogue where Speaker 89 says ‘Hi Robert, I see you’re calling from Michigan. Can I have the last four digits of your phone number?’ and Speaker 76 replies ‘Hi, yes, it’s 9889’”
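To make the idea concrete: under the Model Context Protocol, a client invokes a server tool by sending a JSON-RPC 2.0 `tools/call` request. The sketch below shows roughly what a prompt such as the speech-generation example above might turn into behind the scenes. The tool name `generate_speech` and the argument names `text` and `speaker_id` are assumptions for illustration, not WellSaid's documented interface.

```python
import json
from itertools import count

_request_ids = count(1)  # each JSON-RPC request carries its own id


def make_tool_call(tool_name, arguments):
    """Build a JSON-RPC 2.0 `tools/call` request, the MCP message for invoking a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and argument names, chosen only for illustration.
request = make_tool_call(
    "generate_speech",
    {"text": "Welcome to our training program", "speaker_id": 145},
)
print(json.dumps(request, indent=2))
```

In practice the AI client builds and sends this message for you, which is why a natural prompt is all you need to write.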

Ways to use WellSaid’s MCP Server

The WellSaid MCP Server isn’t just about calling APIs; it’s about enabling real-world, production-ready voice scenarios with natural prompts and flexible controls. Some ways to use the MCP Server include:

Dynamic training content

eLearning modules need to be clear, steady, and easy to follow. With the MCP Server, a developer or instructional designer can:

- Search for a clear, informative voice suited for training

- Apply a slower tempo (ex. -50) so learners can absorb complex concepts

- Quickly generate informative, educational, and highly accessible audio without the need for manual audio editing

- Make training content dynamic: customize the learning experience by user type, and when users don’t pass a comprehension quiz, present the material they missed in a different way

Dynamic advertising and marketing

Marketing teams often need bright, energetic voices to grab attention. With the MCP Server, they can:

- Select any style of professional voice from the library

- Increase pitch, pace, pausing, and loudness slightly (ex. +50) to make the delivery feel more energetic

- A/B test ads and user-facing content faster and at lower cost than with traditional A/B testing methods

- Generate natural-sounding, dynamic audio scripts targeted to your customer base

- Ex. “Introducing the all-new SmartHome 2.0: smarter, faster, and designed for you!”

Character dialogue

Interactive content for audiobooks, games, podcasts, or training simulations often needs multiple characters. The MCP Server makes it easy for teams to:

- Choose two distinct voices with contrasting tones

- Script a short conversation

- Insert short, natural pauses between lines for a more lifelike exchange

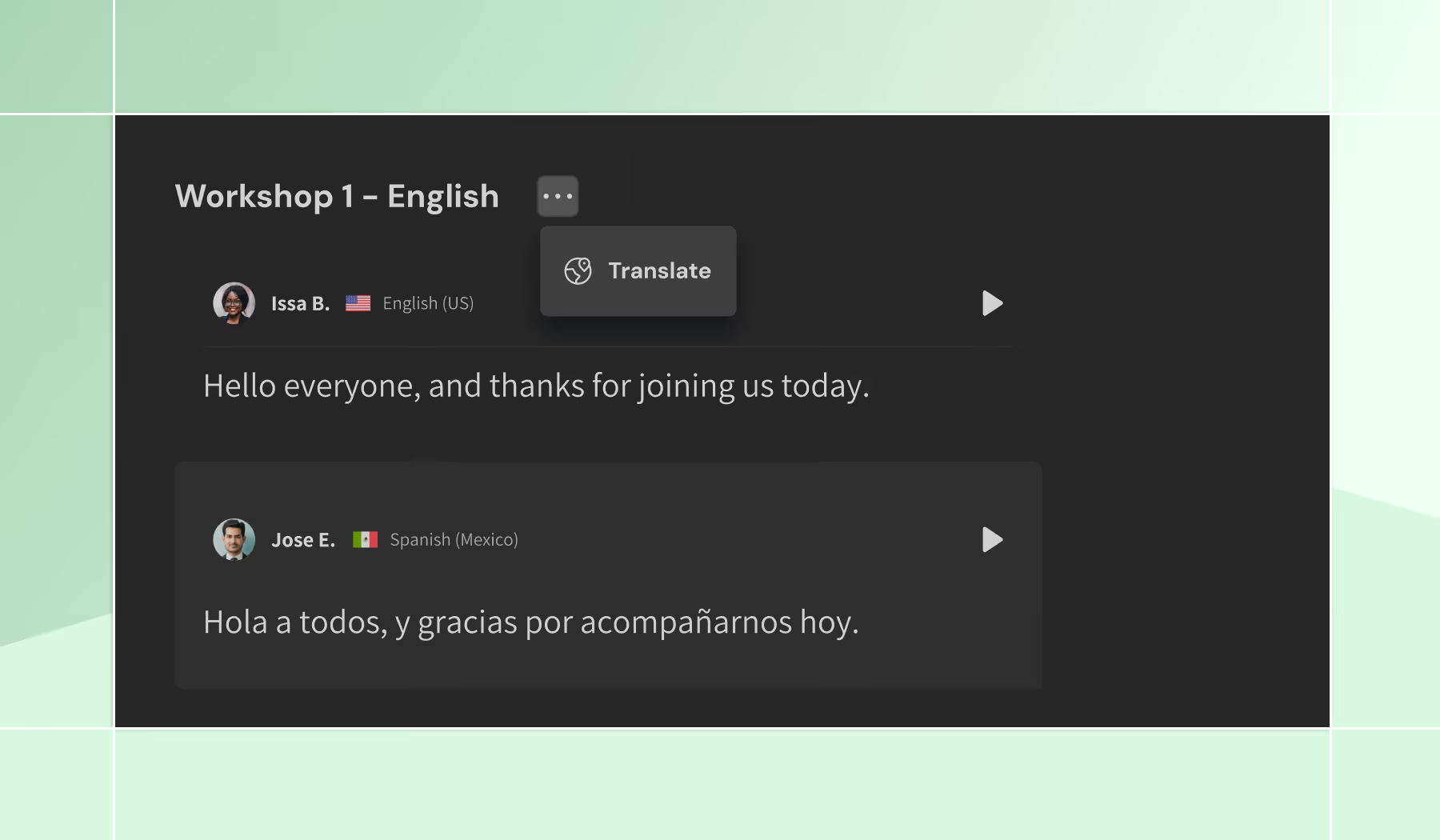
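Because the server is driven by natural prompts, a team scripting many conversations might assemble those prompts from structured data. The following is a minimal sketch of that idea, modeled on the multi-speaker prompt style shown earlier. The `Turn` class and `build_dialogue_prompt` helper are hypothetical names introduced here, not part of any WellSaid tooling.

```python
from dataclasses import dataclass


@dataclass
class Turn:
    speaker_id: int  # speaker ID as referenced in prompts, e.g. "Speaker 89"
    line: str        # the text this speaker should say


def build_dialogue_prompt(turns, pause_hint="a short, natural pause"):
    """Compose a natural-language prompt asking for a multi-speaker dialogue."""
    if len(turns) < 2:
        raise ValueError("a dialogue needs at least two turns")
    parts = []
    for i, turn in enumerate(turns):
        # The first speaker "says" a line; every later speaker "replies".
        verb = "says" if i == 0 else "replies"
        parts.append(f'Speaker {turn.speaker_id} {verb} "{turn.line}"')
    return (
        "Create a dialogue where "
        + ", then ".join(parts)
        + f". Insert {pause_hint} between lines."
    )


turns = [
    Turn(89, "Hi Robert, can I have the last four digits of your phone number?"),
    Turn(76, "Hi, yes, it's 9889"),
]
prompt = build_dialogue_prompt(turns)
print(prompt)
```

The resulting string can be pasted into, or sent through, any MCP-compatible AI client connected to the server.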

Multilingual experiences

Global teams can deliver the same message in multiple languages with a single workflow. They can:

- Search for voices in specific languages and dialects

- Generate the same script in each language needed

All in all

The WellSaid MCP Server takes the complexity out of voice integration and replaces it with simplicity, consistency, and scale. With just a few natural prompts, teams can create expressive, multilingual, and character-driven audio that’s ready for real-world deployment. This is a significant step forward—not only for WellSaid, but for every organization looking to integrate voice into their workflows with speed, creativity, and enterprise-grade reliability.

Try our free two-week trial now!

Step-by-step instructions can be found in our API Docs here.
