Most AI voices can pass a quick test. Play a short clip, and they sound close enough to human.

But sounding close isn’t enough, especially if you’re looking for the most realistic AI voice generator for real content.

Real content isn’t a 10-second clip. It’s a product walkthrough, a training module, or a multi-minute narration that needs to hold attention from start to finish.

That’s where most text-to-speech tools start to break down.

You hear it in subtle ways:

- Pacing that feels slightly off

- Pauses that don’t land naturally

- Mispronounced names or technical terms

- Delivery that shifts from one section to the next

On their own, these issues seem minor. Over time, they make content harder to follow and less credible.

Realism matters because the voice carries the message. When it sounds natural, people stay focused on what you’re saying.

The goal is to create something that holds up across real content and real workflows, not something that only sounds good in isolation.

What realistic AI voice actually means

“Realistic” gets used a lot, but it’s rarely defined in a practical way.

In practice, realism shows up in four areas:

- Natural pacing and conversational rhythm: The voice moves at the right speed, with pauses and flow that feel natural.

- Clear, consistent pronunciation: Names, acronyms, and technical terms are handled correctly every time.

- Tone that matches intent: A training script sounds steady and clear. A marketing message carries more energy. The delivery fits the content.

- Stability across long-form and repeated use: The voice holds steady across full scripts and doesn’t drift as content evolves.

This is what separates tools that sound good in a short clip from tools that stay realistic across full scripts and repeated use.

How WellSaid produces more natural text-to-speech

WellSaid is built around that definition of realism. The focus isn’t speed alone. It’s usable output from the first pass.

At the model level, that shows up in a few key ways:

- Models trained for clarity and stability: WellSaid’s voice models are tuned for real production use, where consistency matters across longer scripts and repeated updates.

- Built-in handling of pacing and emphasis: The system places pauses naturally, adjusts timing, and emphasizes the right words without requiring manual tweaks.

- Accurate pronunciation for real-world language: Brand names, industry terms, and acronyms are handled reliably, so you don’t have to rewrite scripts to fit the model.

- Consistent delivery across full scripts: Tone, pacing, and pronunciation stay aligned from start to finish, even across longer narration.

WellSaid also gives teams control over how scripts translate into speech.

Features like Rewrite for Speech and Emphasis help refine phrasing, tone, and delivery directly in WellSaid Studio. Instead of working around awkward output, teams can shape how the voice performs before generating audio.

The result is less time fixing output and more time producing finished content.

The role of real voice actors

Realism doesn’t start with the model. It starts with the voice itself.

WellSaid builds its library using professional voice actors. These are trained voices designed for clarity, tone control, and long-form listenability.

That shows up in a few important ways:

- Clear delivery: Every word is easy to understand, even in longer scripts.

- Controlled tone: The voice stays steady without overcompensating.

- Consistent listening experience: It holds up over time.

These voices are selected for repeat use. The same AI voice can carry across training content, marketing videos, and product experiences without constant adjustment.

The quality of the source voice directly shapes the quality of the AI voice generator’s output.

Why WellSaid’s AI voice holds up in real workflows

Many text-to-speech platforms sound promising until you try to use them in production.

You generate audio, then spend time adjusting pacing, fixing phrasing, or reworking sections that didn’t land right. The problem isn’t only the output itself. Voice quality varies from one generation to the next, and teams have limited control over how the voice performs across real content.

WellSaid is designed so that the first version is usable.

- Scripts sound right on the first pass

- Fewer retakes and manual edits

- Works across short- and long-form content

- Stays aligned when you update or extend content

For example, Microsoft’s internal enablement team uses WellSaid to scale training across global teams without adding production overhead. Narration that once slowed things down now takes minutes, not hours, and updates no longer create bottlenecks.

That shift allows teams to focus on content quality instead of managing voice production.

As content scales, consistent delivery becomes harder to maintain, especially across formats like LMS modules, YouTube videos, TikTok videos, and Instagram Reels.

Tools that standardize pacing and structure help reduce that complexity. Features like Apply Cues Across Sections keep delivery aligned across longer scripts, while Combine Clips turns structured sections into a single, production-ready file.

Voice generation becomes a reliable production workflow when teams can maintain consistency without adding extra steps.

Where realism drives real impact

When a voice sounds natural, people focus on the content instead of the delivery.

This shift improves how content performs across use cases.

- Training and enablement: Clear delivery improves comprehension and retention, especially within LMS platforms where consistency matters.

- Marketing and video content: Teams can create human-like voiceovers for everything from long-form campaigns to short-form content like YouTube videos, TikTok videos, and Instagram Reels. Strong voice quality supports faster production without sacrificing clarity.

- Product and UX experiences: Natural delivery improves the overall user experience and helps interactions feel more intentional.

- Internal communications: Messaging stays consistent across onboarding, updates, and announcements.

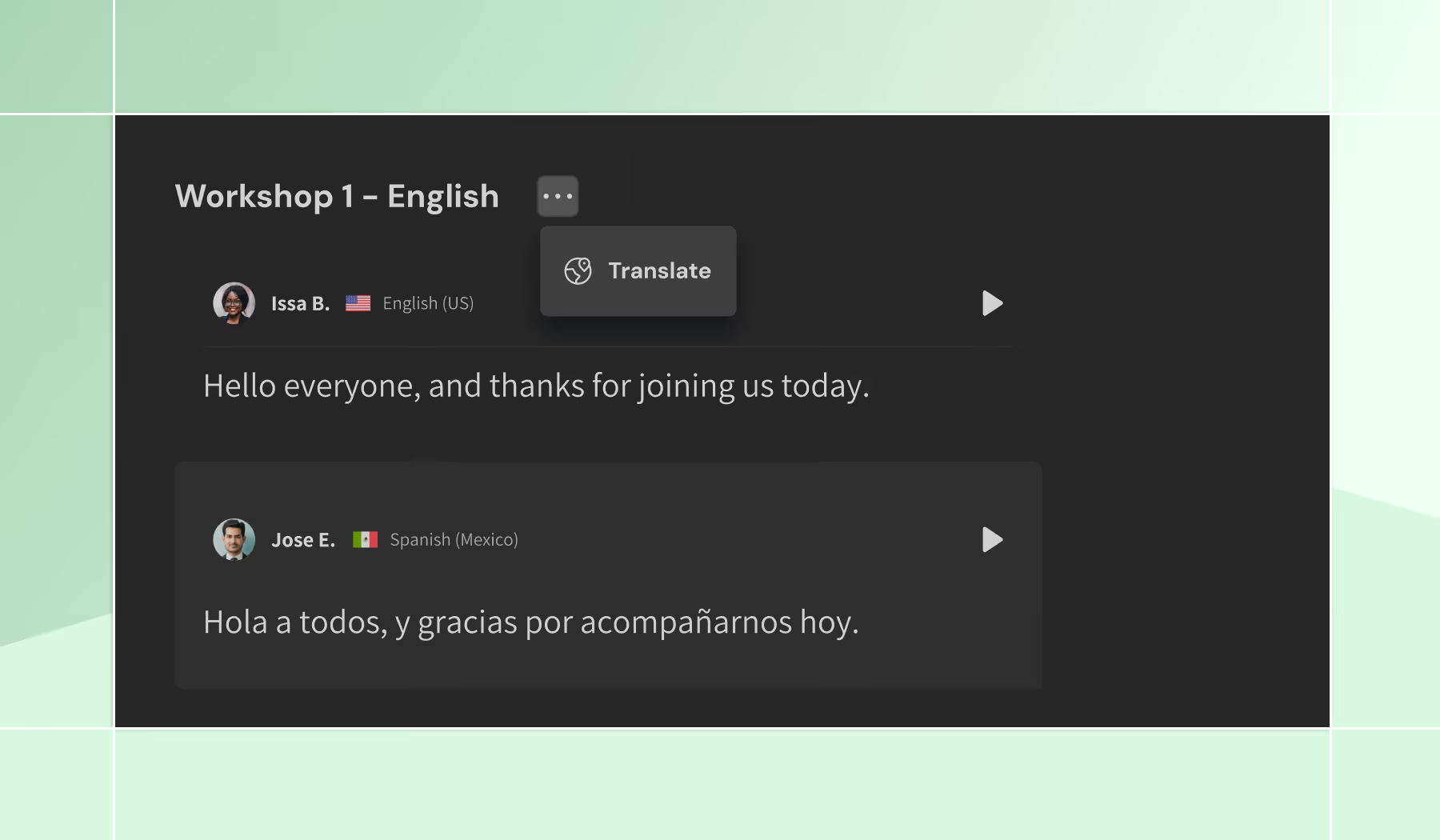

For teams working across regions, multilingual content can be managed within the same workflow, maintaining consistent delivery and narration styles across languages.

Studio: where realism meets control

Realistic output only matters if you can control it.

Many text-to-speech tools get close, but making precise changes often means regenerating sections or exporting audio into tools like Adobe Premiere Pro or Adobe Express to finish the job.

WellSaid Studio removes that friction and gives teams more control over how content is created, refined, and finalized.

Edit and hear changes in real time

Update your script and hear the result immediately, so you can test phrasing and finalize content without slowing down production.

Refine delivery without re-recording

Make targeted updates to words, sentences, or sections while keeping the rest of the audio consistent. This makes it easier to iterate on content without restarting the process.

Control pacing with precision

Timing shapes how natural audio feels.

Studio lets you control pauses between sections directly, so you can shape flow without relying on punctuation workarounds or post-editing. Continuous playback reflects the final output, so there are no surprises at export.

Maintain consistency across longer scripts

In long, multi-section scripts, delivery can drift from one section to the next.

Studio applies cues across sections so pacing and tone stay aligned from start to finish. This reduces review time and helps teams maintain consistency as content evolves.

Work within a unified production environment

Create, edit, combine, and export audio in one place. Teams can move from draft to final output without switching tools or breaking their workflow.

Scale across languages without breaking your workflow

Translate scripts and generate multilingual voiceovers directly in Studio. Each version stays structured and editable, so updates remain manageable over time.

Realistic audio becomes usable when teams can refine, update, and scale it without adding friction to the process.

The AI voice purpose-built for enterprise use

Realism alone isn’t enough. Teams also need confidence that they can use AI voice in production without risk.

WellSaid is built for that level of reliability.

- Full commercial usage rights: Every voice is sourced with clear, contractual rights, so you can use your output across marketing, training, and product content without uncertainty.

- Enterprise-grade platforms and compliance: WellSaid meets SOC 2 and GDPR standards, making it easier to adopt within enterprise environments.

- Responsible voice sourcing and safeguards: Voices are created through consent-based partnerships with professional actors, reducing risk and supporting responsible use.

Features like a built-in voice editor, customizable pronunciation rules, and phoneme-level control give teams more precision over delivery, especially for technical or regulated content.
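
To make the idea of a pronunciation rule concrete, here is a minimal sketch of how such a rule could work as a text preprocessing step. It is not WellSaid’s API or implementation. The rule table, the respellings, and the function name `apply_pronunciation_rules` are all hypothetical, chosen only to illustrate mapping a written term to the way it should be spoken before the script reaches a voice model.

```python
import re

# Hypothetical rule table (an assumption for illustration only):
# written term -> respelling the voice should read aloud.
PRONUNCIATION_RULES = {
    "SQL": "sequel",
    "nginx": "engine x",
    "GDPR": "G D P R",
}


def apply_pronunciation_rules(script: str, rules: dict[str, str]) -> str:
    """Replace whole-word, case-sensitive matches of each term with its respelling."""
    if not rules:
        return script
    # Try longer terms first so overlapping terms resolve predictably.
    terms = sorted(rules, key=len, reverse=True)
    # The lookarounds keep a term from matching inside a longer word (e.g. "SQLite").
    pattern = re.compile(
        r"(?<!\w)(" + "|".join(re.escape(term) for term in terms) + r")(?!\w)"
    )
    return pattern.sub(lambda match: rules[match.group(1)], script)


if __name__ == "__main__":
    print(apply_pronunciation_rules("Run the SQL query on nginx.", PRONUNCIATION_RULES))
    # Run the sequel query on engine x.
```

Keeping rules in one shared table, rather than respelling terms by hand in each script, is what lets the same term sound the same across every section and every update.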

How WellSaid compares to other AI voice tools

Many text-to-speech tools can produce something that sounds good at first listen.

The difference shows up when you use them in real content creation workflows.

Platforms like Murf AI and ElevenLabs are often used for quick outputs or experimentation, especially for short clips or AI-generated videos. As scripts get longer and more complex, issues start to surface:

- Delivery becomes less consistent

- Tone flattens over time

- Pronunciation varies across sections

- Edits require manual fixes or external tools

These limitations affect the overall user experience, especially for teams working at scale.

WellSaid is designed to handle longer scripts and repeated updates without breaking consistency.

- Delivery holds steady across full scripts

- Pacing and emphasis align with the content

- Output stays consistent across updates and projects

Models trained for stability and clarity support this consistency, helping teams produce reliable output without constant adjustment.

The result is a studio-quality AI voice that holds up in real workflows.

Realism that works in practice

Realism comes from a combination of model design, high-quality voice data, and consistent performance across real content.

If you’re looking for the most realistic AI voice generator, the difference shows up in how well the voice holds up once you move beyond short clips and into real workflows.

WellSaid is built for production environments where content evolves, updates happen, and consistency matters.

The best way to evaluate it is with your own script. Try WellSaid and see how it performs in practice.

FAQs

How do realistic AI voices compare to traditional voice recordings?

Realistic AI voices offer similar clarity and consistency without the delays of recording sessions. Teams can update content instantly instead of re-recording audio. This makes them more flexible for ongoing content creation.

How does WellSaid handle pronunciation and accuracy for complex terms?

WellSaid uses pronunciation rules and phoneme-level control to ensure accuracy across scripts. This helps maintain consistency for brand terms and technical language. Teams can also align pronunciation with standards like the Oxford Dictionary.

Can AI voice replace audio editing tools in production workflows?

AI voice reduces the need for traditional audio editing by allowing changes directly in the script. Features like pause control and real-time playback eliminate many post-production steps. Most teams still use editing tools for final polish, but far less often.

Is AI voice safe to use for enterprise and regulated industries?

WellSaid supports SOC 2 controls and GDPR compliance, making it suitable for enterprise use. These standards help teams meet requirements for security and regulatory audits. Safeguards also help address risks like deepfake generation and support responsible content moderation.

How do AI voices adapt to different narrative styles and content types?

AI voices can adjust to different narrative styles through voice selection and delivery controls. Teams can fine-tune tone, pacing, and emphasis to match the content. This helps maintain a consistent experience across formats while improving the overall user experience.
